The Wiimote and transitional space

Interactive Design, Wii

7/31/07

Wired recently featured a piece about new work being done using the Wiimote as an interface for real-world training simulators running in Second Life. Surgery, hazardous chemical handling, nuclear plant operations—all are fair game for WorldWired, a consultancy run by David E. Stone, who calls the Wiimote “one of the most significant technology breakthroughs in the history of computer science.”

Well, obviously I think the Wiimote’s pretty nifty too, but it’s only partly for the reasons given by MIT professor Eric Klopfer in the article: “People know intuitively what to do with it when they pick it up because we use it like devices we are familiar with—bats, rackets, wands, etc.”

So much Wiimote boosterism is about where the remote takes you—the mental models it so seamlessly helps you to adopt. All true, but I would argue that of equal significance is what the remote helps you leave behind. Imagine that the Wiimote comes into widespread usage as an alternative PC input device. Not something you use every day, but something you keep next to your work machine, since you can use it as a PowerPoint or iTunes remote, as well as for those all-too-rare moments when you stumble across a Flash game, online comic or art piece that shows you the delightful message: “If you have a Wiimote, pick it up now.”

Instantly, you’ve left behind the world of work and its input devices, and you’re prepared to experience something unusual—the more unexpected, the better. Even if the piece doesn’t make use of the remote’s motion sensitivity at all, the device itself has still managed to carve out headspace where software art and entertainment no longer have to compete with every other application to remap the meanings of your mouse and keyboard. We’ve got transitional space now; we’ve got a lobby to ease people out of the workaday and into alternate realms.

Calling it a technological breakthrough just doesn’t do it justice, does it?

Shining Flower: an appreciation

Exemplary Work

7/27/07

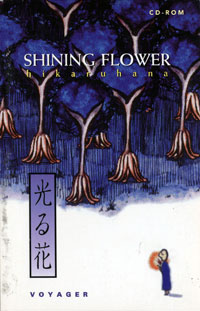

Pictured at right: the cover of one of my all-time favorite digital experiences, the almost completely non-interactive Shining Flower (aka Hikaru Hana), developed by Maze Inc. and published by The Voyager Company, with concept and illustrations by Kikuko Iwano. My niece (those are her cornrows you see at the bottom of every page) recently returned to me the Power Macintosh 8500/120 I lent her when she went to college, and with it I regained the ability to run Shining Flower, to my delight.

Shining Flower was published in 1993 while I was working at Voyager as an audio commentary editor for the Criterion Collection. I have vague memories of seeing it demoed at one of the monthly open houses Voyager held at their offices on the beach at Santa Monica. Love at first sight; that immediate feeling of creative jealousy you get when you see something you wish you’d made. I bought it.

Shining Flower is beautiful, contemplative, quiet, and makes excellent use of limited resources. It’s not pretending to be a movie, or cel animation, or anything other than an 8-bit Director piece (with exemplary use of the lost art of color cycling, I might add). A single character holding a glowing flower makes his/her way through a series of surreal vignettes, on a kind of spiritual journey. Interactivity is limited to basically choosing which several-minute-long sequence you want to watch next.

I remember some grumbling at Voyager about the lack of interaction; at a company which was pioneering the application of same to content of cultural significance, why publish this? On the surface, it did appear to be a misstep, but if you caught the spirit behind this piece—the total commitment to expressing something in this medium, approaching it with the same respect afforded to cinema or literature, fully embracing the technology of the day without harboring self-defeating disdain for its limitations—the appeal of the work was undeniable.

Now when I watch it I find myself wanting to write code that makes it all dynamic, semantic, syntactic and syllabic…

Enjoy: the “beach” vignette of Shining Flower.

Swinging with the London Flash Platform User Group

Announcements, Events, Flash, Wii

7/25/07

Word comes from across the pond that Swing is going to be shown at tomorrow’s London Flash Platform User Group meeting. At a one-hour session called “Fwii Style” (all these Wii puns remind me of the early days of the Macintosh, when all software had to have “Mac” in the title), Adam Robertson of Dusty Pixels will hold forth on the wonders of Wii and Flash:

Forget your PS3’s and 360’s, the Wii is officially the coolest console ever, all thanks to its innovative Wiimote controller. And now you can get in on the motion sensing goodness using Flash.

In this session we’ll take a quick look inside the Wiimote to learn a bit about how it works, then discover how you can use it to control your own Flash projects, both through the official Wii browser (with the Wiicade API) and on your desktop (with FWiidom & WiiFlash). Much arm waving guaranteed.

The follow-up session, called “Make Things Physical” and taught by Leif Lovgreen, sounds pretty great too:

An introduction to physical interaction. Adobe Flash, the Make Controller Kit from MakingThings and a handful of analogue sensors. This session covers the basics of getting started with analogue input as an interface to Flash.

Expect strange things like ice cubes, food, flashlights and a boxing ball to be natural ingredients in this session.

Interaction with analog sensors was something I thought was still beyond the capabilities of Flash; glad to hear this barrier’s coming down.

Those in London environs, take note; sounds like an interesting evening.
